4 Newton Methods

First-order methods like gradient descent use only gradient information and converge at best linearly (or sublinearly for nonsmooth problems). When the objective is smooth and we can afford to compute second-order information — the Hessian — we can do dramatically better. Newton’s method leverages the local curvature of the objective to take steps that are adapted to the geometry of the problem, achieving quadratic convergence near the optimum.

This chapter develops the theory and practice of Newton’s method for unconstrained optimization. The central insight is:

Newton’s method for minimizing f is Newton–Raphson applied to the optimality condition \nabla f(x) = 0.

This means the convergence theory of Newton–Raphson for root-finding transfers directly to Newton’s method for optimization. We develop both perspectives in parallel: starting from Newton–Raphson for root-finding, understanding the various convergence behaviors it can exhibit (convergence, cycling, and divergence), and then establishing a rigorous convergence analysis that reveals a striking two-phase structure — a “damped” phase with constant progress followed by a “pure” phase with double-exponential convergence.

TipCompanion Notebook

4.1 Setting and Iterative Algorithms

We consider the unconstrained optimization problem

\min_{x \in \mathbb{R}^n} f(x),

where f is convex, twice continuously differentiable, with open domain D = \operatorname{dom}(f) \subseteq \mathbb{R}^n. We assume p^* = \min_x f(x) is finite and attained by some x^*.

Since f is convex and differentiable, the first-order optimality condition gives

\bar{x} \in \operatorname{argmin} f(x) \iff \nabla f(\bar{x}) = 0.

This equivalence is the starting point for Newton’s method: minimizing f reduces to solving the system of n equations \nabla f(x) = 0.

Iterative approach. We solve this problem by generating a sequence of iterates x^0, x^1, x^2, \ldots that (hopefully) converge to x^*. The most common template is

x^{k+1} = x^k + \alpha^k \cdot d^k,

where \alpha^k > 0 is the stepsize and d^k is the descent direction. The choice of d^k is what distinguishes different algorithms: gradient descent uses d^k = -\nabla f(x^k) (first-order information only), while Newton’s method uses d^k = -[\nabla^2 f(x^k)]^{-1}\nabla f(x^k) (second-order information via the Hessian).

Definition 4.1 (Descent Direction) A vector d \in \mathbb{R}^n is a descent direction at x if f(x + \alpha d) < f(x) for all sufficiently small \alpha > 0. A sufficient condition is \nabla f(x)^\top d < 0 (the direction has a negative inner product with the gradient).

4.2 Newton’s Method

Gradient descent uses only the first-order Taylor approximation to choose a descent direction. Newton’s method goes one step further: it builds a quadratic (second-order) approximation of f at the current iterate and jumps to its minimizer. This requires the Hessian \nabla^2 f(x), but in return delivers much faster convergence.

Assumption: f is strictly convex and twice continuously differentiable, so \nabla^2 f(x) \succ 0 for all x \in D.

4.2.1 Two equivalent viewpoints

Newton’s method admits two complementary interpretations that lead to the same update rule:

Viewpoint 1: Minimize a quadratic model of f. Approximate f at x by its second-order Taylor expansion:

\widehat{f}_x(y) = f(x) + \langle \nabla f(x),\, y - x \rangle + \tfrac{1}{2}(y - x)^\top \nabla^2 f(x)\,(y - x).

When \nabla^2 f(x) \succ 0, this is a strictly convex quadratic with minimizer y^* = x - [\nabla^2 f(x)]^{-1}\nabla f(x).

Viewpoint 2: Apply Newton–Raphson to \nabla f(x) = 0. Since \bar{x} minimizes f iff \nabla f(\bar{x}) = 0, we can apply the Newton–Raphson root-finding method (see Section 4.3 below) to the equation F(x) = \nabla f(x) = 0. The Jacobian of F is J_F = \nabla^2 f, so the Newton–Raphson update is x^+ = x - [\nabla^2 f(x)]^{-1}\nabla f(x).

Both viewpoints yield the same Newton update:

x^{k+1} = x^k - \bigl[\nabla^2 f(x^k)\bigr]^{-1} \nabla f(x^k).

The equivalence is no coincidence: minimizing the quadratic model sets \nabla \widehat{f}_x(y) = 0, which is exactly the linearization of \nabla f = 0 used by Newton–Raphson. This dual perspective is powerful: Viewpoint 1 provides geometric intuition (fit a parabola and jump to its bottom), while Viewpoint 2 transfers the convergence theory of Newton–Raphson directly to optimization — every convergence result, pathology, and condition we establish for Newton–Raphson immediately applies to Newton’s method.

Is the Newton direction a descent direction? Yes, whenever \nabla^2 f(x) \succ 0:

\nabla f(x)^\top d = -\nabla f(x)^\top \bigl[\nabla^2 f(x)\bigr]^{-1} \nabla f(x) < 0.

TipRemark: Quadratic Functions

If f is quadratic, i.e., f(x) = x^\top P x + q^\top x + r with P \succ 0, Newton’s method converges in one iteration — the quadratic model is exact.

How to read Figure 4.2. The blue curve is the true objective f(x), with the current iterate x_k marked in terracotta. The gold dashed parabola is the local quadratic model \widehat{f}_{x_k}, which matches f at x_k in both value and slope (the green dashed tangent line). The Newton step d_{\mathrm{nt}} (terracotta arrow) jumps from x_k to x_{k+1}, the minimizer of this quadratic model. The true minimizer x^* lies further right — Newton’s method approaches it iteratively.

NoteInteractive Demo: Newton’s Method for Minimization

Drag the slider to change the starting point and watch Newton’s method converge. The black curve is f(x) = x\log x - 2x (minimizer at x^* = e \approx 2.718). Dashed curves are the quadratic models at each iterate.

4.3 Newton–Raphson for Root-Finding

Since Newton’s method for optimization is Newton–Raphson applied to \nabla f = 0 (Viewpoint 2 above), we now develop Newton–Raphson for general root-finding, and the convergence theory will carry over to optimization.

The Newton–Raphson method solves nonlinear equations F(x) = 0, where F\colon \mathbb{R}^n \to \mathbb{R}^n, by iterating

x^{k+1} = x^k - \bigl(J_F(x^k)\bigr)^{-1} F(x^k),

where J_F is the Jacobian of F. The idea is to linearize F at x^k via F(x^k) + J_F(x^k)(x - x^k) = 0 and solve the linear system. In the univariate case, this reduces to x^{k+1} = x^k - F(x^k)/F'(x^k).

Example 4.1 (Computing Square Roots) To find \sqrt{a}, set F(x) = x^2 - a. Newton–Raphson gives the classical iteration x^{k+1} = \frac{1}{2}(x^k + a/x^k).

Geometric meaning (F\colon \mathbb{R} \to \mathbb{R}):

How to read Figure 4.3. The blue parabola is F(x) with roots x_1^* and x_2^* (lavender dots on the x-axis). Starting from x^k (terracotta), the green line is the tangent to F at that point. The next iterate x^{k+1} (green dot) is where this tangent crosses zero. Each iteration replaces the curve with its tangent approximation and solves the simpler linear problem.

The tangent line at (x^k, F(x^k)) is:

y - F(x^k) = F'(x^k)\,(x - x^k).

Setting y = 0 gives:

x = x^k - \bigl(F'(x^k)\bigr)^{-1} F(x^k).

NoteInteractive Demo: Newton–Raphson for Root-Finding

Solve F(x) = \log(x) - 1 = 0 (root at x^* = e). The tangent line at each iterate crosses zero to produce the next iterate.

4.4 Behaviors of Newton–Raphson

Before establishing convergence guarantees, it is instructive to see what can go wrong. Newton–Raphson can exhibit three qualitatively different behaviors depending on the initialization and the structure of F.

Convergence (with sensitivity to initialization). For F(x) = x^2 - 9, if x_0 > 0 then x_k \to 3; if x_0 < 0 then x_k \to -3; if x_0 = 0 then F'(x_0) = 0 and the method cannot proceed. The root Newton–Raphson converges to depends on the starting point.

When F has multiple roots, the sensitivity to initialization can be extreme. Consider F(x) = x^3 - 2x^2 - 11x + 12 with roots at x = -3, 1, 4. Five initial points differing by less than 10^{-4} — namely x_0 \in \{2.352875, 2.352842, 2.352837, 2.352836327, 2.352836323\} — converge to three different roots.

NoteInteractive Demo: Sensitivity to Initialization

Part 1: Explore freely. Drag the slider to see which root of F(x) = (x+3)(x-1)(x-4) Newton–Raphson converges to. The left plot shows iterates on the function; the right plot shows the iteration trajectory x_k vs k.

Part 2: Five starting points within 10^{-4} of each other. The table below shows that five initial points, all near x_0 \approx 2.3528, converge to three different roots. Select any row to see its full iteration trajectory.

Cycling. For F(x) = x^3 - 2x + 2 with x_0 = 0: F'(x) = 3x^2 - 2, giving x_1 = 1, x_2 = 0, and the method cycles with period 2. Cycles of arbitrary period can be constructed; see this discussion for period-3 examples.

NoteInteractive Demo: Cycling Behavior

Newton–Raphson applied to F(x) = x^3 - 2x + 2. Start at x_0 = 0 to see period-2 cycling; try other starting points to see convergence or more complex behavior.

Divergence. For F(x) = x^{1/3}: the update x' = x - 3x = -2x gives |x'| > |x| — the iterates diverge. Even for convex objectives, Newton’s method can diverge: \min_x f(x) = x\log x + x gives F(x) = \log x + 2, and starting from x_0 = 1 yields x_1 = -1, which is outside the domain.

NoteInteractive Demo: Divergence

For F(x) = x^{1/3}, the Newton step gives x' = x - x^{1/3}\big/\bigl(\tfrac{1}{3}x^{-2/3}\bigr) = -2x. Each iterate doubles in magnitude and flips sign.

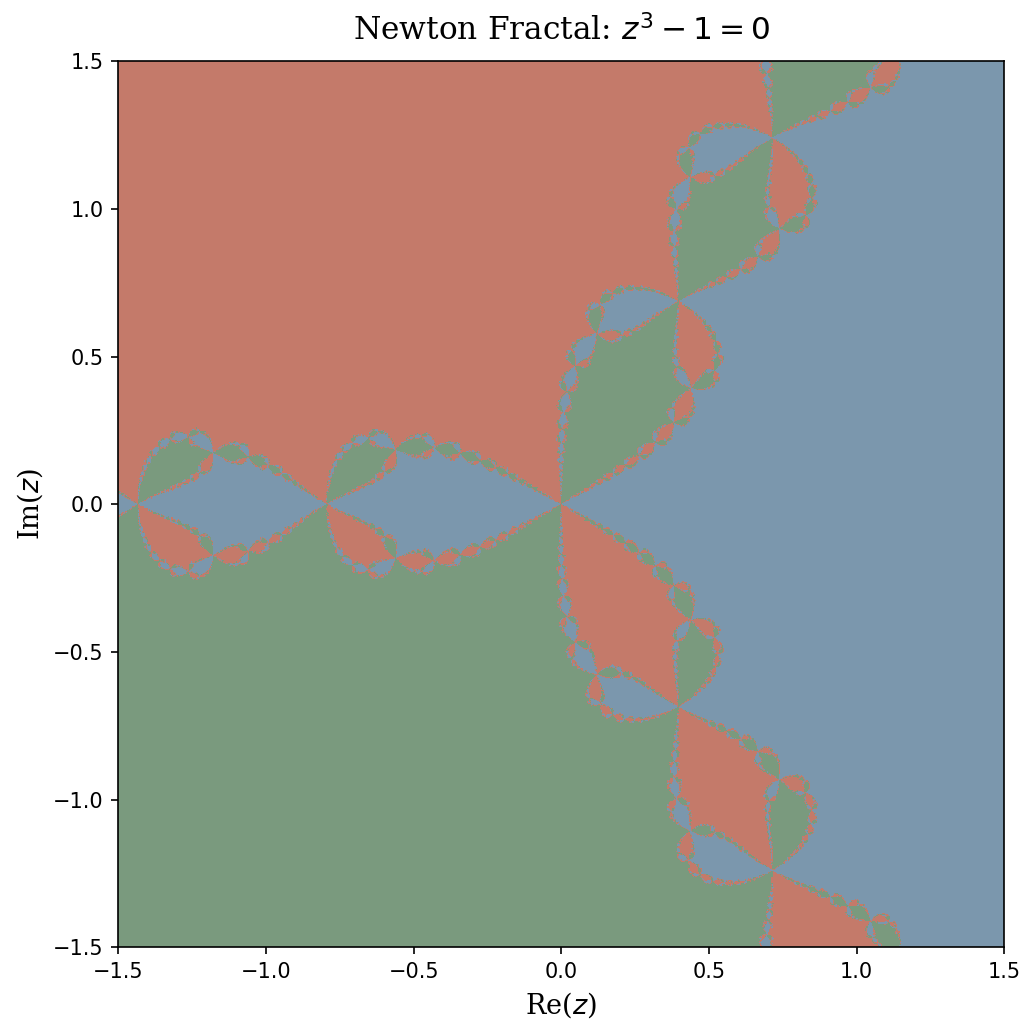

4.4.1 Newton Fractals

The sensitivity to initialization is not merely a nuisance — it has deep mathematical structure. Consider applying Newton–Raphson to F(z) = z^3 - 1 = 0 in the complex plane, where the three roots are the cube roots of unity: 1, e^{2\pi i/3}, and e^{4\pi i/3}.

For each starting point z_0 = a + bi, we run Newton–Raphson and record which of the three roots the iterates converge to. Coloring each pixel by its limiting root produces the Newton fractal (Figure 4.4).

How to read the figure. Each point in the complex plane represents a starting value z_0. The three colors correspond to the three cube roots of unity. If a pixel is colored blue, then Newton–Raphson initialized at that z_0 converges to the root 1; green means convergence to e^{2\pi i/3}; and gold means convergence to e^{4\pi i/3}. The large monochromatic regions are basins of attraction — open sets of initial conditions that all converge to the same root. The intricate boundaries between these regions are where the interesting behavior lies.

The key conclusion. Along the fractal boundary, the three basins interleave at every scale. This means: for any starting point z_0 on the boundary, and any \varepsilon > 0 no matter how small, the ball B(z_0, \varepsilon) contains points that converge to all three different roots. In other words, an infinitesimal perturbation to the initialization can send Newton–Raphson to a completely different solution. This is not a rare pathology — the fractal boundary has positive measure in many Newton fractals, and the phenomenon occurs for any polynomial of degree \geq 2 with distinct roots.

NoteInteractive Demo: Newton Fractal Explorer

Choose the polynomial z^n - 1 and zoom level. Each pixel is colored by which n-th root of unity Newton–Raphson converges to. Darker shading means more iterations were needed. Zoom in to see the self-similar fractal boundary.

TipFurther Reading: Newton Fractals

- 3Blue1Brown: Newton’s Fractal — an excellent visual introduction

- Paul Bourke: Newton–Raphson Fractals — gallery and mathematical background

- SciPython: The Newton Fractal — Python implementation

4.4.2 Implications for Optimization

Takeaway for optimization. Since Newton’s method for \min_x f(x) is Newton–Raphson for \nabla f(x) = 0, all three pathologies carry over. When f is nonconvex and has multiple stationary points, the stationary point Newton converges to is sensitive to initialization — and the basins of attraction can have fractal boundaries. Even when f is convex, the pure Newton step d^k = -[\nabla^2 f(x^k)]^{-1}\nabla f(x^k) can be too large, causing the method to overshoot. The remedy is to introduce a damped Newton step:

x^{k+1} = x^k - \alpha^k \cdot \underbrace{\bigl(\nabla^2 f(x^k)\bigr)^{-1} \nabla f(x^k)}_{d^k}, \tag{4.1}

where \alpha^k is chosen by backtracking line search: starting from \alpha = 1, repeatedly shrink \alpha \leftarrow \beta \alpha (with \beta \in (0,1)) until the Armijo condition f(x + \alpha d) \leq f(x) + c \cdot \alpha \cdot d^\top \nabla f(x) is satisfied (with c \in (0, 1/2)). This guarantees f(x^{k+1}) < f(x^k) at every step, preventing divergence while preserving fast convergence near the optimum.

4.5 Newton Decrement

With the damped Newton update (4.1) and backtracking line search in hand, we need a principled stopping criterion and a way to measure progress. The Newton decrement serves both roles. Let d_{\text{nt}}(x) = -[\nabla^2 f(x)]^{-1} \nabla f(x) denote the Newton direction.

Definition 4.2 (Newton Decrement) The Newton decrement at point x is:

\lambda(x) = \sqrt{\nabla f(x)^\top \bigl(\nabla^2 f(x)\bigr)^{-1} \nabla f(x)} = \sqrt{d_{\text{nt}}(x)^\top \nabla^2 f(x)\, d_{\text{nt}}(x)}.

The Newton decrement has three useful interpretations:

Rate of decrease. The directional derivative along d_{\text{nt}} is \langle \nabla f(x), d_{\text{nt}}(x) \rangle = -\lambda(x)^2, so \lambda(x)^2 measures how fast f decreases in the Newton direction.

Surrogate for the optimality gap. The gap between f(x) and the minimum of the local quadratic model is f(x) - \inf_y \widehat{f}_x(y) = \frac{1}{2}\lambda(x)^2, so \frac{1}{2}\lambda(x)^2 approximates f(x) - p^*.

Steepest descent in the Hessian norm. Since \|u\|_{\nabla^2 f(x)} = \sqrt{u^\top \nabla^2 f(x)\, u} is a norm when \nabla^2 f(x) \succ 0, the Newton direction is the steepest descent direction under this local norm. This explains why Newton’s method adapts to the geometry of f: it uses the curvature-adapted norm rather than the Euclidean norm.

Affine invariance. A key advantage of Newton’s method over gradient descent is that \lambda(x) is invariant under invertible affine transformations. If we reparameterize via \bar{f}(y) = f(Ty) for invertible T, a direct computation shows d_{\text{nt},\bar{f}}(y) = T^{-1} d_{\text{nt},f}(Ty) and \lambda_{\bar{f}}(y) = \lambda_f(Ty). The Newton iterates in the new coordinates are exactly the transformed iterates in the old coordinates — Newton’s method is coordinate-free. Gradient descent, by contrast, is highly sensitive to coordinate scaling (ill-conditioning).

4.6 Convergence Analysis: Quadratic Convergence

Having defined the Newton decrement as a measure of progress, we are now ready to state and prove the main convergence result. Newton’s method features quadratic convergence:

\|x^{k+1} - x^*\| \leq M \cdot \|x^k - x^*\|^2,

when k is sufficiently large.

4.6.1 Rates of Convergence

Before stating the convergence theorem, we recall the standard taxonomy of convergence rates. These rates describe how quickly the error sequence \{e_k\} vanishes.

Definition 4.3 (Rates of Convergence) Let \{e_k\}_{k \geq 1} be a sequence with \lim_{k \to \infty} e_k = 0. Define:

\delta_L = \lim_{k \to \infty} \frac{e_{k+1}}{e_k}, \qquad \delta_Q = \lim_{k \to \infty} \frac{e_{k+1}}{(e_k)^2}.

- Linear convergence \iff 0 < \delta_L < 1.

- e.g., e_k = C \cdot q^k, q \in (0,1). Error decays exponentially.

- Superlinear convergence \iff \delta_L = 0.

- e.g., e_k = \frac{C}{k!} or e_k = \frac{C}{k^k}. Faster than \exp(-k).

- Sublinear convergence \iff \delta_L = 1.

- e.g., e_k = \frac{C}{\sqrt{k}}, e_k = C \cdot k^{-\alpha} (\alpha > 0). Slower than \exp(-k).

- Quadratic convergence \iff \delta_Q \in (0,1).

- e.g., e_k = q^{2^k}. Error decays double exponentially.

4.6.2 Quadratic Convergence of Newton–Raphson (1-D)

Let us first focus on Newton–Raphson (1-D) to gain intuition. The following theorem shows that when the initial point is close enough to a simple root, the error squares at each iteration — a hallmark of quadratic convergence.

The key idea. Newton–Raphson replaces F with its tangent line and finds the root of that line. Near a simple root, the tangent line is an excellent approximation of F, and the error in this approximation is proportional to (e^k)^2 (the quadratic term in the Taylor expansion). So each step converts the current error e^k into a squared error — and squaring a small number makes it much smaller.

Theorem 4.1 (Quadratic Convergence of Newton–Raphson) Let x^* be a root of F with F'(x^*) \neq 0 (a simple root), and suppose |F''(x)| \leq M in a neighborhood of x^*. Define the constant C = M / |F'(x^*)| and the radius \delta = 1/(2C). Then for any x^0 \in [x^* - \delta,\, x^* + \delta], Newton–Raphson converges to x^* with:

|x^{k+1} - x^*| \leq C \cdot |x^k - x^*|^2.

In particular, setting e^k = |x^k - x^*|:

e^k \leq \frac{1}{C}\Bigl(\frac{1}{2}\Bigr)^{2^k},

so the number of correct digits roughly doubles at each step.

The proof proceeds by Taylor-expanding F around x^k and bounding the resulting error recurrence. The strategy is: (1) subtract the fixed-point relation from the update to isolate e^{k+1}, and (2) use the Taylor remainder to bound it.

Proof. Step 1: Error recurrence. The Newton–Raphson update is x^{k+1} = x^k - F(x^k)/F'(x^k), and x^* is a fixed point since F(x^*) = 0. Define the error e^k = x^k - x^*. Taylor-expanding F(x^*) around x^k:

0 = F(x^*) = F(x^k - e^k) = F(x^k) - e^k \cdot F'(x^k) + \frac{(e^k)^2}{2} F''(\xi^k), \tag{4.2}

for some \xi^k between x^k and x^* (by the mean value form of the remainder).

Step 2: Isolate e^{k+1}. Rearranging Equation 4.2 and dividing by F'(x^k):

e^k - \frac{F(x^k)}{F'(x^k)} = \frac{F''(\xi^k)}{2\,F'(x^k)} \cdot (e^k)^2.

The left-hand side is exactly x^k - x^* - (x^{k+1} - x^k) = x^{k+1} - x^* = e^{k+1} (with appropriate sign). We need F'(x^k) \neq 0; this holds because |F'(x^k)| \geq |F'(x^*)| - M\delta > |F'(x^*)|/2 > 0 when \delta < |F'(x^*)|/(2M).

Step 3: Bound. Taking absolute values:

|e^{k+1}| \leq \frac{M}{2\,|F'(x^k)|} \cdot (e^k)^2 \leq \frac{M}{|F'(x^*)|} \cdot (e^k)^2 = C \cdot (e^k)^2.

Since C \cdot e^0 \leq C \cdot \delta = 1/2 < 1, the sequence \{C \cdot e^k\} satisfies C \cdot e^{k+1} \leq (C \cdot e^k)^2, giving C \cdot e^k \leq (1/2)^{2^k}. \blacksquare

An alternative viewpoint: define g(x) = x - F(x)/F'(x). Since g'(x^*) = 0 (because F(x^*) = 0), Taylor expansion gives g(x) - g(x^*) \approx C(x - x^*)^2, which is exactly the quadratic convergence relation e^{k+1} \approx C(e^k)^2.

When quadratic convergence fails.

Quadratic convergence requires F'(x^*) \neq 0 (a simple root). When F'(x^*) = 0 (a multiple root), the constant C = M/|F'(x^*)| blows up and we lose the quadratic rate. The method still converges, but only linearly:

Example 4.2 (Linear Convergence at a Multiple Root) F(x) = x^2 has a double root at x^* = 0 with F'(0) = 0. Then g(x) = x - F(x)/F'(x) = x/2, giving:

(x^{k+1} - 0) = \frac{1}{2}(x^k - 0). \quad \text{(Linear convergence with rate $1/2$.)}

Takeaway from the 1-D analysis. Newton–Raphson converges quadratically near simple roots, but only linearly near multiple roots. The two conditions for quadratic convergence — F'(x^*) \neq 0 (non-degeneracy) and |F''| bounded (smoothness) — will reappear in the multivariate setting as strong convexity and Lipschitz Hessian.

4.6.3 Convergence Analysis of Newton’s Method

The 1-D analysis above reveals the essential mechanism: the error squares at each step when we start close enough to the root. We now extend this to the multivariate setting, where the damped Newton method (4.1) exhibits a characteristic two-phase convergence. We assume throughout that f is “nice”:

- Strongly convex and smooth: m I_n \preceq \nabla^2 f(x) \preceq M I_n for all x.

- Lipschitz Hessian: \|\nabla^2 f(x) - \nabla^2 f(y)\| \leq L \|x - y\|_2.

Condition 1 plays the role of “F'(x^*) \neq 0” from the 1-D case (it ensures the Newton step is well-defined and bounded). Condition 2 plays the role of “F'' bounded” (it controls how well the quadratic model approximates f).

Why two phases? The 1-D theorem required starting in a neighborhood of x^*. But what if we start far away? A full Newton step (\alpha = 1) could increase f — the quadratic model is only a local approximation, and far from x^* we cannot trust it globally. The solution: use damped steps (small \alpha) while far away, then switch to full steps (\alpha = 1) once we are close enough.

Phase I (damped Newton, far from optimum): The gradient is large (\|\nabla f(x^k)\| \geq \eta), so we are far from x^*. We use a conservative step size \alpha = m/M. Newton gives us a good direction, but we don’t trust the full step size. Each step decreases f by at least a constant \gamma — similar to gradient descent, but with a curvature-adapted direction. This phase lasts at most (f(x^0) - p^*)/\gamma steps.

Phase II (pure Newton, near optimum): The gradient is small (\|\nabla f(x^k)\| < \eta), so the quadratic model is an excellent approximation. Full Newton steps (\alpha = 1) are accepted by backtracking, and the error squares at each step — just like in the 1-D case. This phase needs only \log\log(1/\varepsilon) additional steps to reach \varepsilon accuracy.

Theorem 4.2 (Convergence Theory (Newton + Backtracking)) Under assumptions 1–2, there exist \eta = m^2/L and \gamma = \frac{m\eta^2}{2M^2} > 0 such that:

(1) Phase I (damped steps). If \|\nabla f(x^k)\|_2 \geq \eta, then stepsize \alpha^k = m/M gives:

f(x^{k+1}) - f(x^k) \leq -\gamma. \quad \text{(constant decrease per step)} \tag{4.3}

(2) Phase II (pure Newton steps). If \|\nabla f(x^k)\|_2 < \eta, then stepsize \alpha^k = 1 is accepted by backtracking, and:

\frac{L}{2m^2}\|\nabla f(x^{k+1})\|_2 \leq \Bigl(\frac{L}{2m^2}\|\nabla f(x^k)\|_2\Bigr)^2. \quad \text{(quadratic convergence)} \tag{4.4}

Total iteration complexity to reach f(x^k) - p^* \leq \varepsilon:

K(\varepsilon) = \underbrace{\frac{f(x^0) - p^*}{\gamma}}_{\text{Phase I (linear)}} + \underbrace{\log\log\bigl(2m^2/(L^2\varepsilon)\bigr)}_{\text{Phase II (quadratic)}}.

How to read Figure 4.7. The figure shows the suboptimality gap f(x^k) - p^* on a log scale versus iterations. In Phase I (left portion), the method uses damped steps and the gap decreases linearly (constant progress per step). Once \|\nabla f(x^k)\| drops below the threshold \eta = m^2/L, the method enters Phase II (right portion): pure Newton steps with \alpha = 1 yield quadratic convergence — the number of correct digits roughly doubles each iteration, appearing as a steep plunge on the log scale.

Unpacking Phase II: double-exponential convergence. Once \|\nabla f(x^k)\|_2 < \eta at some iteration k, the method stays in Phase II (the bound (4.4) implies \|\nabla f(x^{k+1})\| < \eta). Setting a_\ell = \frac{L}{2m^2}\|\nabla f(x^\ell)\|_2, the recursion a_\ell \leq a_{\ell-1}^2 with a_k \leq 1/2 gives

\|\nabla f(x^\ell)\|_2 \leq \frac{2m^2}{L} \cdot \Bigl(\frac{1}{2}\Bigr)^{2^{\ell-k}}.

This is double-exponential decay: the number of correct digits roughly doubles at each step. Using strong convexity, we can translate this into other convergence measures: \|x^k - x^*\| \leq \frac{1}{m}\|\nabla f(x^k)\| (by the mean value theorem) and f(x^k) - p^* \leq \frac{1}{2m}\|\nabla f(x^k)\|^2 (by gradient domination, Section 4.9.1).

NoteInteractive Demo: Newton vs. Gradient Descent (1-D)

Compare Newton’s method (green) and gradient descent (blue) on f(x) = e^x + e^{-x} (minimizer at x^* = 0). Newton converges in very few steps; gradient descent progresses linearly.

4.6.3.1 Analysis of Phase I

Setup: \|\nabla f(x)\|_2 \geq \eta (we are far from the optimum).

Goal: Show that each damped Newton step decreases f by at least a constant \gamma > 0.

Strategy. The argument has three parts: (1) bound how much f decreases along the Newton direction as a function of \alpha and \lambda(x); (2) choose the step size \alpha = m/M; (3) show that \lambda(x) is bounded below by a constant (because \|\nabla f\| is large). Combining these gives the constant decrease.

Step 1: Decrease along the Newton direction. Since \nabla^2 f \preceq M \cdot I, by Taylor expansion:

f(x + \alpha\, d_{\text{nt}}(x)) \leq f(x) + \alpha\, d_{\text{nt}}(x)^\top \nabla f(x) + \frac{\alpha^2 M}{2} \|d_{\text{nt}}(x)\|_2^2. \tag{4.5}

Since \nabla^2 f \succeq m \cdot I:

\|d_{\text{nt}}(x)\|_2^2 \leq \frac{1}{m}\, d_{\text{nt}}(x)^\top \nabla^2 f(x)\, d_{\text{nt}}(x) = \frac{1}{m}\bigl(\lambda(x)\bigr)^2. \tag{4.6}

Also:

\bigl(\lambda(x)\bigr)^2 = \nabla f(x)^\top \bigl(\nabla^2 f(x)\bigr)^{-1}\nabla f(x) = -d_{\text{nt}}(x)^\top \nabla f(x). \tag{4.7}

Combining (4.5), (4.6), and (4.7):

f(x + \alpha\, d_{\text{nt}}(x)) \leq f(x) + \Bigl(-\alpha + \frac{M\alpha^2}{2m}\Bigr)\bigl(\lambda(x)\bigr)^2.

Step 2: Choose the step size. Setting \alpha^* = m/M (this minimizes the upper bound above; it is the ratio of strong convexity to smoothness, a natural “trust radius”):

f(x + \alpha^* d_{\text{nt}}(x)) \leq f(x) - \frac{m}{2M}\bigl(\lambda(x)\bigr)^2 = f(x) - \frac{\alpha^*}{2}\bigl(\lambda(x)\bigr)^2.

Step 3: Lower bound on \lambda(x). We need the decrease \frac{\alpha^*}{2}\lambda(x)^2 to be at least a constant. Since \|\nabla f(x)\| is large, we can bound \lambda(x) from below:

\bigl(\lambda(x)\bigr)^2 = \nabla f(x)^\top \bigl(\nabla^2 f(x)\bigr)^{-1} \nabla f(x) \geq \lambda_{\min}\bigl((\nabla^2 f(x))^{-1}\bigr) \|\nabla f(x)\|_2^2 \geq \frac{1}{M}\|\nabla f(x)\|_2^2.

Therefore:

f(x^{k+1}) - f(x^k) \leq -\frac{\alpha^*}{2} \cdot \frac{1}{M}\|\nabla f(x^k)\|_2^2 \leq -\frac{m}{2M^2}\eta^2.

Conclusion. Whenever \|\nabla f(x^k)\| \geq \eta, the damped Newton step with \alpha^* = m/M decreases f by at least \gamma = \frac{m\eta^2}{2M^2}. Since f is bounded below by p^*, Phase I can last at most (f(x^0) - p^*)/\gamma iterations before \|\nabla f(x^k)\| drops below \eta.

4.6.3.2 Analysis of Phase II

Setup: \|\nabla f(x)\|_2 < \eta = m^2/L (we are near the optimum).

Goal: Show that the pure Newton step (\alpha = 1) achieves quadratic convergence: the gradient norm after one step is bounded by the square of the gradient norm before.

The key idea is identical to the 1-D proof: the Newton step makes \nabla f(x^{k+1}) exactly zero if f were truly quadratic. The residual \|\nabla f(x^{k+1})\| comes entirely from the error in the quadratic approximation — the “cubic” remainder term — which is controlled by the Hessian Lipschitz constant L.

Step 1: The Newton step kills the quadratic part of \nabla f. By the definition of the Newton step, \nabla f(x) + \nabla^2 f(x) \, d_{\text{nt}}(x) = 0, so the gradient at the new point is:

\nabla f(x + d_{\text{nt}}(x)) = \underbrace{\nabla f(x + d_{\text{nt}}(x)) - \nabla f(x) - \nabla^2 f(x)\, d_{\text{nt}}(x)}_{\text{error from Hessian variation along the step}}.

Step 2: Bound the Hessian variation using the integral form. Writing the difference as an integral and applying the Lipschitz condition:

\|\nabla f(x + d_{\text{nt}}(x))\|_2 = \biggl\|\int_0^1 \bigl(\nabla^2 f(x + t\, d_{\text{nt}}(x)) - \nabla^2 f(x)\bigr)\, d_{\text{nt}}(x)\, dt\biggr\|_2 \leq \frac{L}{2}\|d_{\text{nt}}(x)\|_2^2.

The inequality uses \|\nabla^2 f(x + td) - \nabla^2 f(x)\| \leq tL\|d\| and \int_0^1 t\,dt = 1/2. This is the multivariate analogue of the Taylor remainder bound |F''(\xi^k)| \leq M in the 1-D proof.

Step 3: Bound the Newton step size. Since \nabla^2 f \succeq mI:

\|d_{\text{nt}}(x)\|_2 = \|(\nabla^2 f(x))^{-1}\nabla f(x)\|_2 \leq \frac{1}{m}\|\nabla f(x)\|_2.

Step 4: Combine. Substituting Step 3 into Step 2:

\|\nabla f(x + d_{\text{nt}}(x))\|_2 \leq \frac{L}{2m^2}\|\nabla f(x)\|_2^2.

Dividing both sides by 2m^2/L and writing a_k = \frac{L}{2m^2}\|\nabla f(x^k)\|_2, this becomes the recurrence a_{k+1} \leq a_k^2 — the same squaring relation as in the 1-D case.

Step 5: Unroll the recurrence. At the start of Phase II (iteration k_0), \|\nabla f(x^{k_0})\| \leq \eta = m^2/L, so a_{k_0} \leq 1/2. Applying a_{k+1} \leq a_k^2 repeatedly:

\frac{L}{2m^2}\|\nabla f(x^k)\|_2 \leq \Bigl(\frac{1}{2}\Bigr)^{2^{k-k_0}}. \quad \text{(Quadratic convergence.)}

Conclusion. Once the gradient norm drops below \eta = m^2/L, pure Newton steps (\alpha = 1) produce double-exponential decay: the number of correct digits roughly doubles at each iteration. After just \ell steps in Phase II, the gradient norm shrinks by a factor of (1/2)^{2^\ell}. For example, 10 Phase II iterations give (1/2)^{1024} \approx 10^{-308} — essentially machine precision. This is why Phase II contributes only \log\log(1/\varepsilon) to the total iteration count.

4.6.3.3 Validity of Unit Stepsize in Phase II

Why this section is needed. The Phase II analysis above proved that if we take a full Newton step (\alpha = 1), the gradient norm squares. But in practice we use backtracking line search, which starts at \alpha = 1 and shrinks \alpha until it finds sufficient decrease. We need to verify that backtracking actually accepts \alpha = 1 — otherwise it would shrink the step size and we would not get quadratic convergence. The key condition is that \|\nabla f(x)\| \leq \eta = m^2/L: when the gradient is small enough, the Newton step is short enough that the cubic error is negligible, and \alpha = 1 gives sufficient decrease.

Technique: Bound derivatives of g(\alpha) = f(x + \alpha\, d_{\text{nt}}(x)).

By Lipschitz Hessian:

\|\nabla^2 f(x + \alpha\, d_{\text{nt}}(x)) - \nabla^2 f(x)\| \leq \alpha \cdot L \cdot \|d_{\text{nt}}(x)\|_2.

This implies:

\bigl|d_{\text{nt}}(x)^\top\bigl[\nabla^2 f(x + \alpha\, d_{\text{nt}}(x)) - \nabla^2 f(x)\bigr] d_{\text{nt}}(x)\bigr| \leq \alpha \cdot L \cdot \|d_{\text{nt}}(x)\|_2^3.

Note: (\lambda(x))^2 = d_{\text{nt}}(x)^\top \nabla^2 f(x)\, d_{\text{nt}}(x) \geq m \|d_{\text{nt}}(x)\|_2^2.

Let g(\alpha) = f(x + \alpha\, d_{\text{nt}}(x)). Then:

g(0) = f(x), \quad g'(0) = d_{\text{nt}}(x)^\top \nabla f(x) = -(\lambda(x))^2,

g''(\alpha) = d_{\text{nt}}(x)^\top \nabla^2 f(x + \alpha\, d_{\text{nt}}(x))\, d_{\text{nt}}(x), \quad g''(0) = (\lambda(x))^2.

By the Lipschitz bound:

g''(\alpha) \leq g''(0) + \alpha \cdot L \cdot \|d_{\text{nt}}(x)\|_2^3 \leq (\lambda(x))^2 + \frac{\alpha \cdot L}{m^{3/2}}(\lambda(x))^3.

Integrating this inequality:

g'(\alpha) = g'(0) + \int_0^\alpha g''(u)\, du \leq -(\lambda(x))^2 + \alpha \cdot (\lambda(x))^2 + \frac{\alpha^2 L}{2m^{3/2}}(\lambda(x))^3.

Integrating again:

g(\alpha) = g(0) + \int_0^\alpha g'(t)\, dt \leq f(x) - \alpha\,(\lambda(x))^2 + \frac{\alpha^2}{2}(\lambda(x))^2 + \frac{\alpha^3 L}{6m^{3/2}}(\lambda(x))^3.

= f(x) - (\lambda(x))^2 \Bigl[\alpha - \frac{\alpha^2}{2} - \frac{\alpha^3 L}{6m^{3/2}}\lambda(x)\Bigr].

We want to set \alpha = 1 and still get decrease. Note:

\lambda(x) \leq \sqrt{\lambda_{\min}((\nabla^2 f)^{-1})} \cdot \|\nabla f(x)\|_2 \leq \sqrt{1/m} \cdot \|\nabla f(x)\|_2 \leq \eta / \sqrt{m}.

When \eta \leq m^2/L, we have \lambda(x) \leq m^{3/2}/L, so:

\alpha - \frac{\alpha^2}{2} - \frac{\alpha^3 L}{6 m^{3/2}}\lambda(x) \geq 1 - \frac{1}{2} - \frac{1}{6} = \frac{1}{3}.

Thus:

g(1) = f(x + d_{\text{nt}}(x)) \leq f(x) - \frac{1}{3}(\lambda(x))^2.

Conclusion. When \|\nabla f(x)\| \leq \eta = m^2/L, the full Newton step (\alpha = 1) decreases f by at least \frac{1}{3}\lambda(x)^2. This is more than enough for the Armijo condition to accept \alpha = 1, so backtracking does not shrink the step size. Combined with the Phase II quadratic convergence analysis, this completes the proof of Theorem 4.2: Phase I makes constant progress with damped steps, Phase II achieves quadratic convergence with full steps, and the transition happens automatically when \|\nabla f\| drops below \eta.

4.7 Summary

4.7.1 Logic Flow of This Chapter

The central theme: Newton’s method for minimizing f is Newton–Raphson for solving \nabla f(x) = 0. This equivalence lets us develop both perspectives in parallel.

Two viewpoints, one algorithm. Minimizing the quadratic model of f (Viewpoint 1) and applying Newton–Raphson to \nabla f = 0 (Viewpoint 2) give the same update x^+ = x - [\nabla^2 f(x)]^{-1}\nabla f(x). Viewpoint 1 gives geometric intuition; Viewpoint 2 transfers the root-finding convergence theory directly to optimization.

What can go wrong? Newton–Raphson can converge, cycle, or diverge depending on initialization. These pathologies carry over to optimization. The remedy: damped Newton steps with backtracking line search.

The Newton decrement \lambda(x) measures progress in an affine-invariant way, serving as both a stopping criterion (\frac{1}{2}\lambda(x)^2 \approx f(x) - p^*) and a phase indicator.

Two-phase convergence. Phase I (damped, \|\nabla f\| \geq \eta): constant decrease per step. Phase II (pure Newton, \|\nabla f\| < \eta): quadratic convergence — the number of correct digits doubles each iteration.

4.7.2 Key Results

Phase II: Local Quadratic Convergence.

Requires:

- \|\nabla f(x)\| \leq \eta = m^2/L,

- \nabla^2 f Lipschitz,

- (\nabla^2 f(x))^{-1} bounded \iff \nabla^2 f(x) \succeq m I.

These conditions parallel those in the convergence analysis of Newton–Raphson:

| Newton–Raphson | Newton’s Method |

|---|---|

| Neighborhood [x^* - \delta,\, x^* + \delta] | \|\nabla f(x)\| \leq \eta |

| F'(x^*) \neq 0 | (\nabla^2 f)^{-1} bounded |

| F'' bounded norm | \nabla^2 f Lipschitz |

Phase I: Smoothness is only used in Phase I.

- Stepsize \alpha^* = m/M.

- If ill-conditioned (M/m large), the stepsize must decay.

4.8 Computational Cost and Practical Considerations

Newton’s method converges dramatically faster than gradient descent (quadratic vs. linear), but each iteration is more expensive. Computing the Newton step requires forming the Hessian (O(n^2) storage) and solving the linear system \nabla^2 f(x) d = \nabla f(x) (O(n^3) computation). When n is large, this can be prohibitive.

Several strategies mitigate this cost. If \nabla^2 f has special structure (sparse, banded, low-rank), the linear solve can be much cheaper. Quasi-Newton methods (such as L-BFGS) approximate [\nabla^2 f]^{-1} using gradient information alone, achieving superlinear convergence without ever forming the Hessian. Self-concordant functions provide an alternative theoretical framework where Newton’s method has strong convergence guarantees without explicitly assuming Lipschitz Hessians.

4.9 Appendix: Strong Convexity

Strong convexity is the key assumption underlying both the Phase I analysis (which uses the lower bound on the Hessian) and the convergence guarantees for Newton’s method. We collect the definition and main properties here for reference.

Definition 4.4 (Strong Convexity) A function f is m-strongly convex if f(x) - \frac{m}{2}\|x\|^2 is a convex function.

If f is twice differentiable, f is m-strongly convex if and only if \lambda_{\min}(\nabla^2 f(x)) \geq m for all x \in \mathbb{R}^n.

Properties of strong convexity:

- x^* is unique.

- f(y) \geq f(x) + \nabla f(x)^\top(y - x) + \frac{m}{2}\|y - x\|_2^2 (definition).

- (\nabla f(x) - \nabla f(y))^\top(x - y) \geq m \|x - y\|_2^2 (\nabla^2 f \succeq m I).

- \frac{1}{2}\|\nabla f(x)\|_2^2 \geq m \cdot (f(x) - f(x^*)) (gradient domination).

- f(y) \leq f(x) + \nabla f(x)^\top(y - x) + \frac{1}{2m}\|\nabla f(y) - \nabla f(x)\|_2^2.

4.9.1 Proof of Property 4

In Property 2, minimize over y \in \mathbb{R}^n. We have:

g(y) = f(x) + \nabla f(x)^\top(y - x) + \frac{m}{2}\|y - x\|^2,

which has minimizer y^* = x - \frac{1}{m}\nabla f(x). Thus:

f(x^*) = \min_y f(y) \geq \min_y g(y) = f(x) - \frac{1}{2m}\|\nabla f(x)\|_2^2.

That is:

\|\nabla f(x)\|_2^2 \geq 2m \cdot (f(x) - f(x^*)). \quad \blacksquare

4.9.2 Proof of Property 5

Consider another function:

\phi_x(z) = f(z) - \nabla f(x)^\top z.

Claim: \operatorname{argmin}_z \phi_x(z) = x (check first-order condition: \nabla \phi_x(x) = \nabla f(x) - \nabla f(x) = 0).

Since f is strongly convex, \phi_x is strongly convex. We verify:

(\nabla \phi_x(z_1) - \nabla \phi_x(z_2))^\top(z_1 - z_2) = (\nabla f(z_1) - \nabla f(z_2))^\top(z_1 - z_2) \geq m \|z_1 - z_2\|_2^2.

So \phi_x is strongly convex (Property 3).

Now:

f(y) - (f(x) + \nabla f(x)^\top(y - x))

= (f(y) + \nabla f(x)^\top y) - (f(x) + \nabla f(x)^\top x)

= \phi_x(y) - \phi_x(x)

= \phi_x(y) - \min_z \phi_x(z) \leq \frac{1}{2m}\|\nabla \phi_x(y)\|_2^2 = \frac{1}{2m}\|\nabla f(x) - \nabla f(y)\|_2^2. \quad \blacksquare

The last inequality uses Property 4 applied to \phi_x.

TipLooking Ahead

In the next chapter, we extend Newton’s method to constrained optimization via interior point methods. The key idea is to encode inequality constraints using a logarithmic barrier function, reducing the constrained problem to a sequence of smooth unconstrained (or equality-constrained) problems that can be solved with Newton’s method.